At PEKAT VISION, our focus has always been on deep learning–based machine vision for industrial quality inspection. But when we recently introduced a broader range of Datalogic machine vision products on our website, many customers asked:

“How do I know which solution is right for my application?”

To make it easier, we’ve put together a short Q&A covering the most common questions — from deep learning vs. rule-based approaches to camera choices and processor hardware.

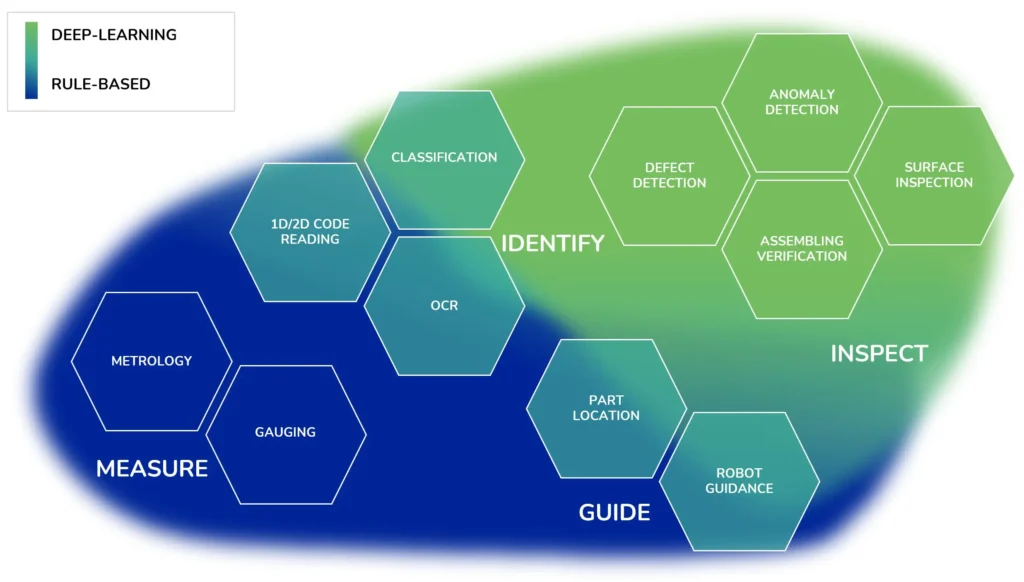

When should I use deep learning, and when is rule-based vision better?

Deep Learning Machine Vision

Deep learning is especially powerful when your products show natural variability — for example, meat cuts, baked goods, textiles, or castings. Traditional rule-based methods struggle in these cases because edges, contours, and shapes can differ from piece to piece. With deep learning, the system learns from examples and can still make consistent decisions, even if every product looks slightly different.

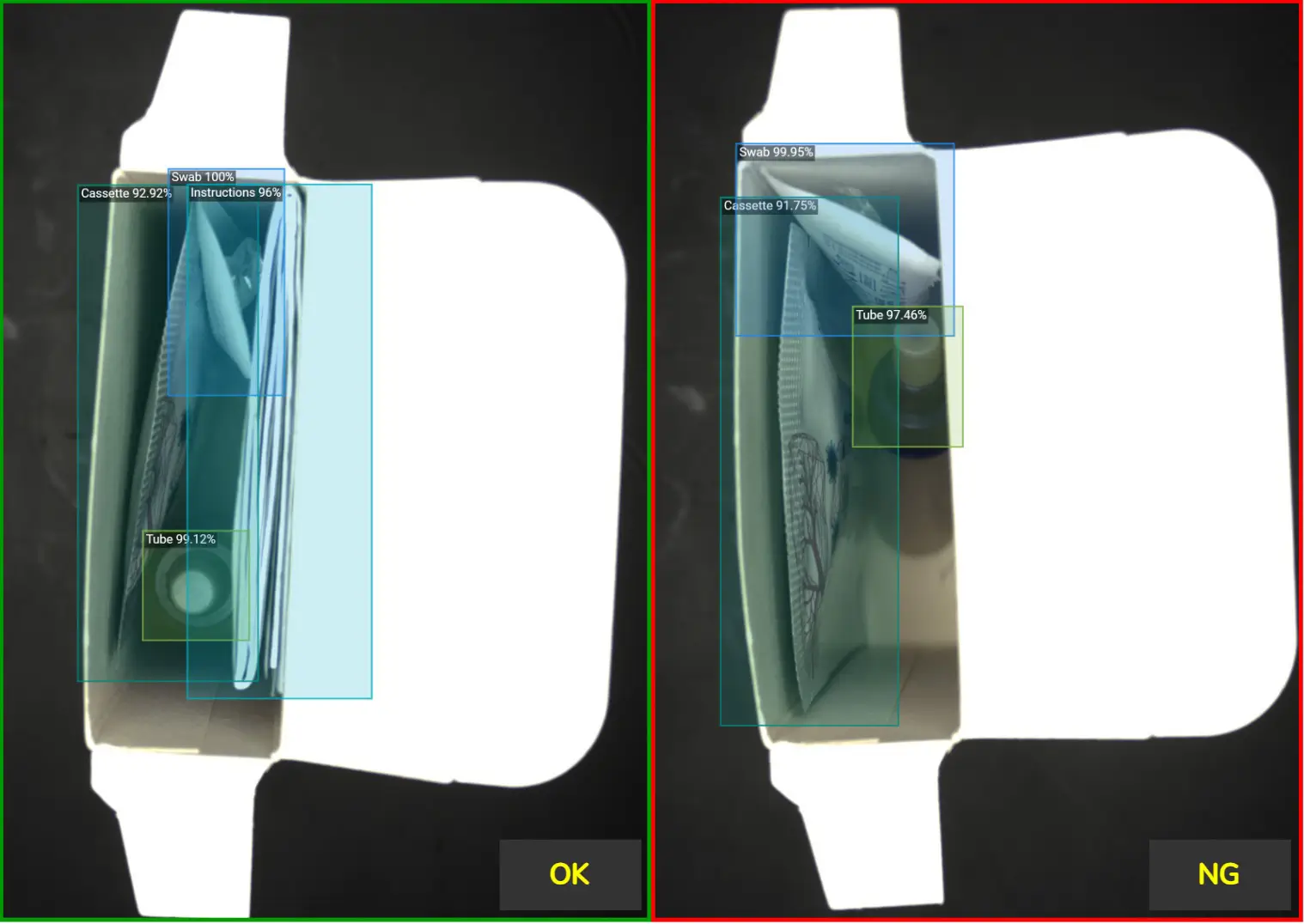

Example 1: Packaging Inspection. Verifying that a pharma package contains all required items — a test tube, swab, cassette, and instructions — would be nearly impossible with traditional rule-based machine vision. Why? Highly reflective foil on the cassette, items that are not perfectly aligned, variable positions inside the package, and irregular edges make it difficult to define fixed inspection rules. Deep learning, however, can learn the acceptable configurations from real examples and reliably verify completeness.

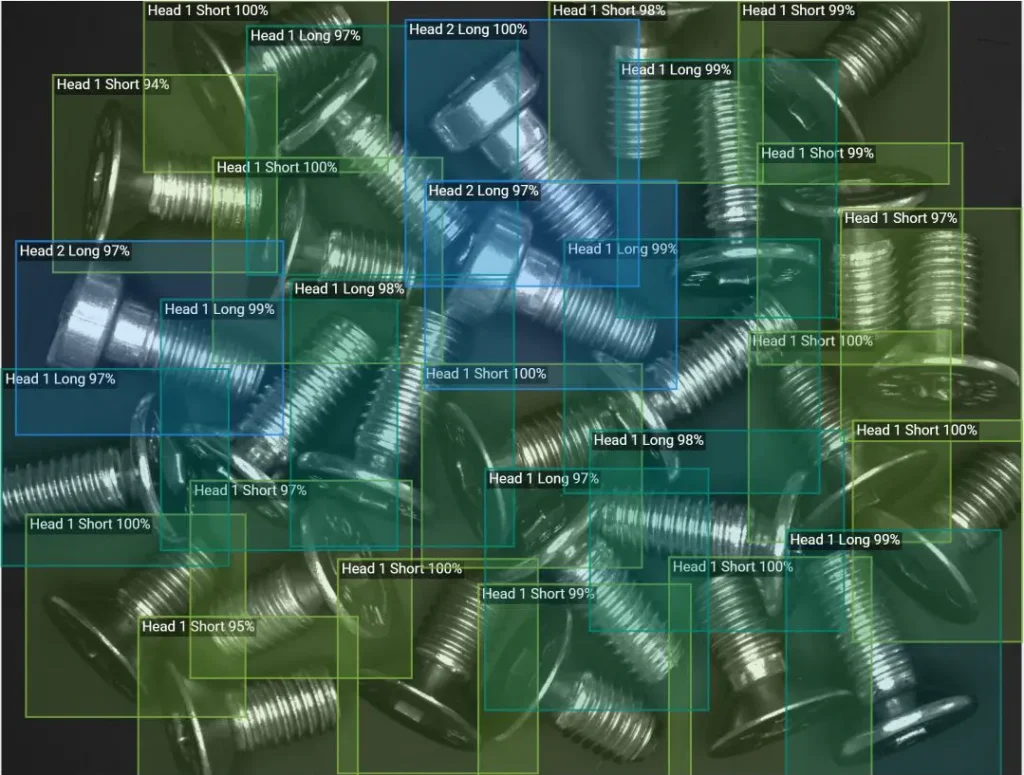

Example 2: Verifying the number and type of screws. A seemingly simple task like verifying the correct number and type of screws can become slow and error-prone, especially when the screws differ only slightly. With PEKAT VISION deep learning software, the orientation, rotation, and placement of the screws do not matter. The software detects, classifies, and (if needed) counts each screw, even when dozens are scattered in different directions.

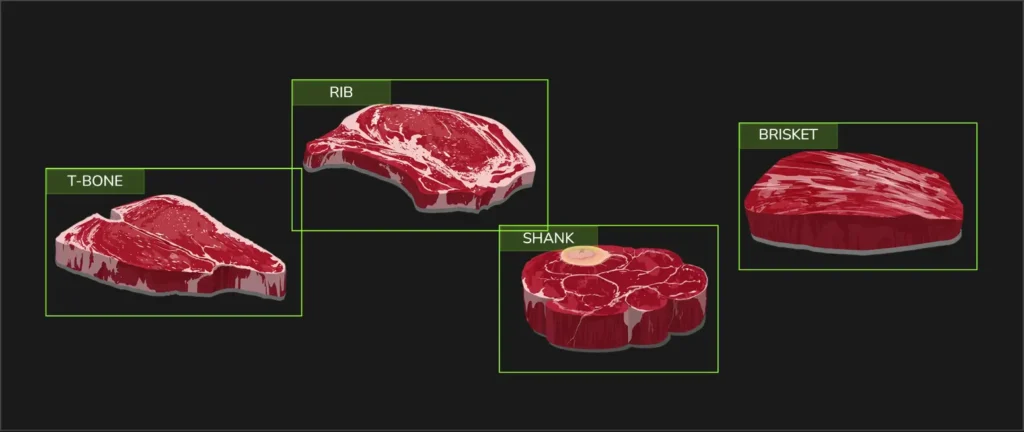

Example 3: Meat cut classification. How do you train a machine to recognize meat cuts when every single piece is different? In the food industry, no two pieces of meat are identical. Shape, size, texture, and even color can vary. That’s why traditional rule-based vision methods — which rely on fixed features such as edges or contours — struggle with this level of natural variability. Deep learning models, on the other hand, learn from representative examples and can generalize to new, slightly different pieces.

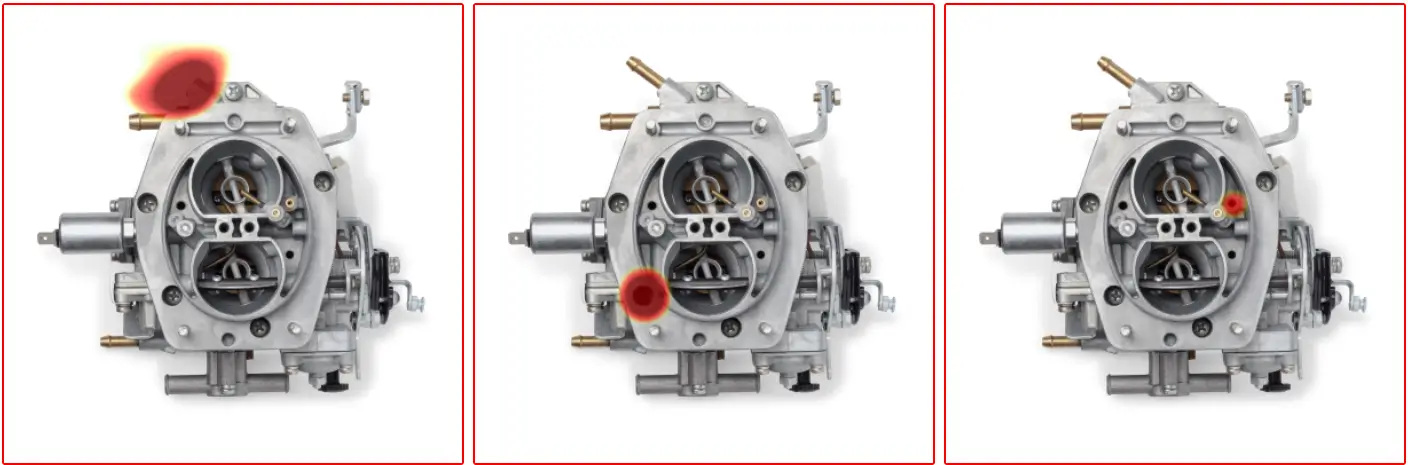

Anomaly detection: When defects are rare or unpredictable.

Another powerful deep learning approach is anomaly detection. Instead of training the system on examples of every possible defect, you train it only on good products. The software learns what “normal” looks like — and flags anything that deviates from this learned standard.

This is particularly valuable when defects are rare, previously unseen, or difficult to define precisely. In many real-world applications, collecting enough defective samples for traditional supervised training is either impractical or impossible. Anomaly detection eliminates the need for extensive defect annotation and still enables reliable inspection.
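The "train only on good products" idea can be illustrated with a very small statistical sketch. This is not how PEKAT VISION works internally; it is a simplified stand-in that assumes each image has already been reduced to a short feature vector. The function names, the feature values, and the threshold of 3.0 are all hypothetical.

```python
from statistics import mean, stdev

def fit_normal_model(good_samples):
    """Learn per-feature mean and standard deviation from good parts only."""
    columns = list(zip(*good_samples))
    return [(mean(column), stdev(column)) for column in columns]

def anomaly_score(model, sample):
    """Largest absolute z-score across all features; higher means less normal."""
    scores = []
    for (mu, sigma), value in zip(model, sample):
        if sigma > 0:
            scores.append(abs(value - mu) / sigma)
        else:
            # Feature never varied in training: any change is a deviation.
            scores.append(0.0 if value == mu else float("inf"))
    return max(scores)

def is_anomalous(model, sample, threshold=3.0):
    """Flag a sample whose score exceeds the (assumed) threshold."""
    return anomaly_score(model, sample) > threshold

# Hypothetical feature vectors extracted from images of good parts only.
good = [(0.50, 10.1), (0.52, 9.9), (0.49, 10.0), (0.51, 10.2), (0.48, 9.8)]
model = fit_normal_model(good)

print(is_anomalous(model, (0.50, 10.0)))  # a typical good part
print(is_anomalous(model, (0.50, 14.0)))  # second feature far outside the learned range
```

No defective sample was needed to build the model. The second part is flagged only because it differs from what the good parts looked like.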

Example 4: Carburetor assembly. In one case, a small spring might be missing. In another, a screw may not be inserted. In yet another, a gasket could be absent or incorrectly positioned. Instead of trying to define every possible missing-part scenario, the system learns the correct, fully assembled carburetor and automatically highlights any deviation — even if that specific defect has never been seen before.

Another key advantage of PEKAT VISION deep learning software is usability: practically anyone familiar with Windows can set up a deep learning inspection, label training images, and train a model. This makes AI-based inspection more accessible to operators or engineers without prior machine vision experience.

Rule-based Machine Vision

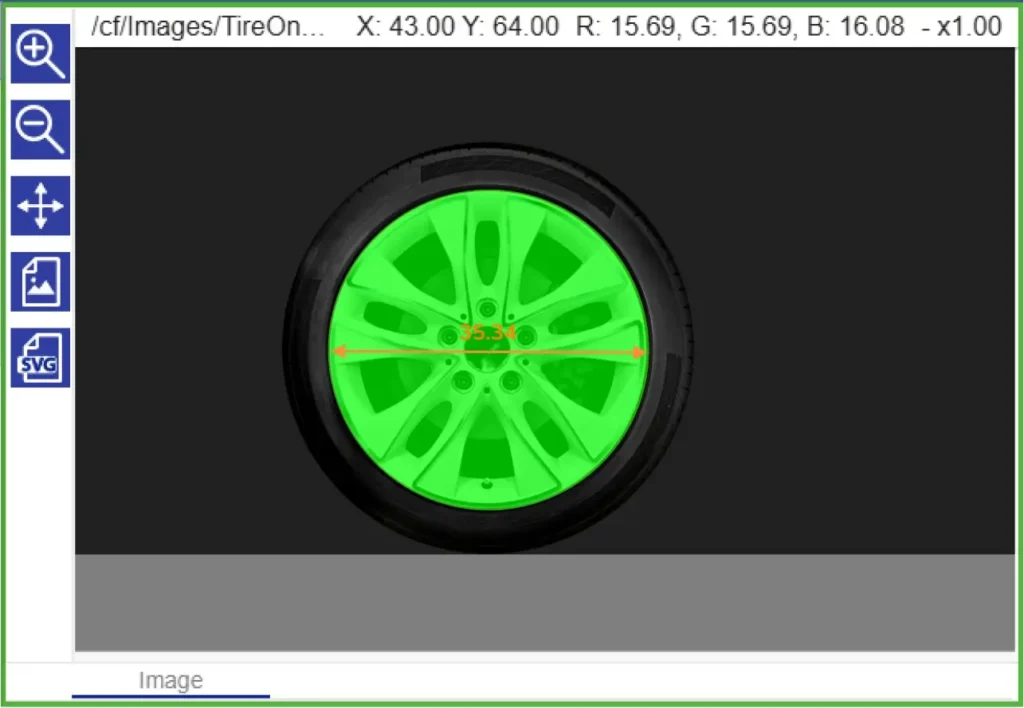

Rule-based vision, by contrast, shines when the task can be defined in precise, repeatable rules. If you need to measure a component, verify alignment, or read a barcode, a rule-based system will likely be faster, lighter, and easier to configure. It excels at applications where the product is consistent and the inspection criteria are well defined.

However, setting up these inspections generally requires at least some knowledge of machine vision principles — knowing how to configure edge detection, thresholding, pattern matching, or measuring tools. Even small changes in the product or environment can require manual adjustments.

Example 1: Tire Diameter Measurement. For applications requiring precise dimensional verification, rule-based vision is the optimal choice. In this example, the system measures the tire diameter using calibrated edge detection and measurement tools, ensuring repeatable and highly accurate results. When tolerances are clearly defined and the geometry is consistent, deterministic measurement algorithms outperform deep learning in speed, simplicity, and reliability.
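To show why this kind of measurement is deterministic, here is a minimal sketch of an edge-based width measurement along a single line of pixels. It is not IMPACT code. It assumes a dark object on a bright background, a fixed gray-level threshold, and a known calibration factor in millimeters per pixel, and all names and values are hypothetical.

```python
def measure_diameter_mm(profile, threshold, mm_per_pixel):
    """Width between the first and last pixel darker than the threshold."""
    dark = [i for i, value in enumerate(profile) if value < threshold]
    if not dark:
        raise ValueError("no object found along the profile")
    width_px = dark[-1] - dark[0] + 1
    return width_px * mm_per_pixel

def within_tolerance(value, nominal, tolerance):
    """True if the measured value lies inside nominal +/- tolerance."""
    return abs(value - nominal) <= tolerance

# Synthetic intensity profile: bright background, 600 dark pixels, bright background.
profile = [250] * 10 + [40] * 600 + [250] * 10
diameter = measure_diameter_mm(profile, threshold=128, mm_per_pixel=0.5)

print(diameter)                                   # 300.0
print(within_tolerance(diameter, 300.0, 0.5))     # True
```

The same input always produces the same result, and every step (threshold, edge positions, calibration) can be inspected directly. The same transparency means the threshold and calibration must be readjusted by hand if lighting or optics change.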

Example 2: Wire Color Order Verification. When inspection criteria are strictly defined, such as verifying the correct sequence of colored wires, rule-based vision provides fast and dependable results. Using color analysis and positional logic, the system checks whether each wire appears in the correct order. For structured, rule-driven tasks like this, traditional machine vision is efficient, transparent, and easy to validate.
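The color-plus-position logic can be sketched roughly as follows. This is only an illustration, not the actual tool configuration: it assumes the average RGB color of each wire has already been sampled from left to right, and the reference colors and function names are hypothetical.

```python
# Hypothetical reference colors (RGB) for the wires used in this harness.
REFERENCE_COLORS = {
    "red": (200, 30, 30),
    "green": (30, 160, 60),
    "blue": (30, 60, 200),
    "yellow": (220, 210, 40),
}

def classify_color(rgb):
    """Return the name of the reference color closest to the measured RGB value."""
    def squared_distance(name):
        return sum((a - b) ** 2 for a, b in zip(rgb, REFERENCE_COLORS[name]))
    return min(REFERENCE_COLORS, key=squared_distance)

def check_wire_order(measured_colors, expected_order):
    """Classify each wire left to right and compare against the expected sequence."""
    observed = [classify_color(rgb) for rgb in measured_colors]
    return observed == list(expected_order), observed

# Average colors sampled at each wire position, left to right.
measured = [(190, 40, 35), (35, 150, 70), (40, 70, 190)]
ok, observed = check_wire_order(measured, ["red", "green", "blue"])
print(ok, observed)  # True ['red', 'green', 'blue']
```

Because the rule is explicit, a failed check can be traced back to the exact wire position and the color that was found there.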

The key is not to see one method as replacing the other, but to choose the right tool for the right job — and sometimes combine them within the same application.

For more details, you can also explore our earlier article: Overview of Deep Learning vs. Rule-based Approaches.

What role does the IMPACT software play in machine vision?

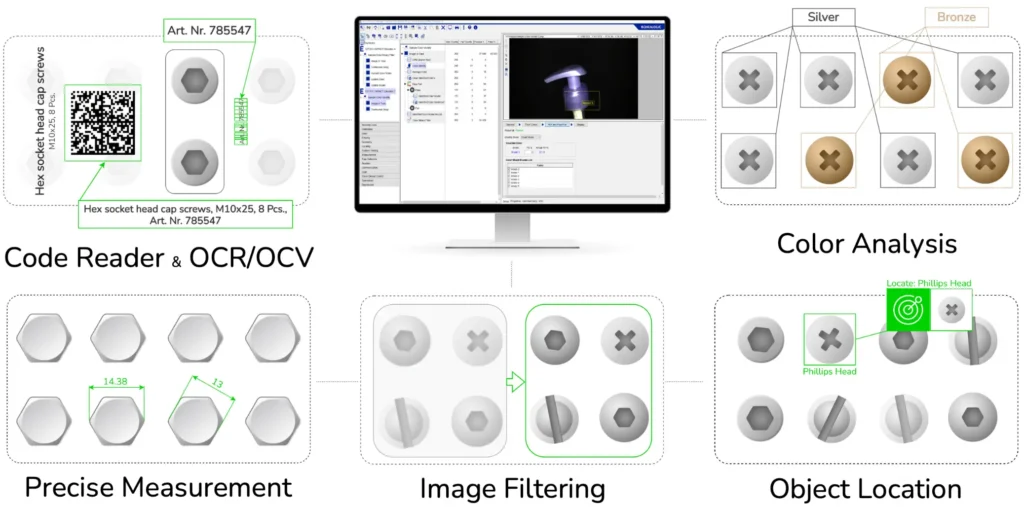

IMPACT is Datalogic’s rule-based machine vision software. It provides a library of predefined tools for measurement, code reading, alignment, pattern matching, and more. This makes it highly effective for applications where consistency and exact rules matter — like verifying dimensions, checking that a component is correctly positioned, or guiding a robot to a precise pick point.

Unlike deep learning software, IMPACT doesn’t “learn” from examples but instead follows explicit logic. That can be a limitation when dealing with natural variations, but it’s a huge advantage in tasks that demand deterministic, repeatable results. Customers may use IMPACT in combination with PEKAT VISION, depending on the task — see our next question.

Can deep learning and rule-based vision work together?

Yes — and in many cases, combining them delivers the most powerful solution.

For example, a deep learning model can first classify different product types, after which rule-based tools measure specific features or verify tolerances. In other scenarios, rule-based vision handles precise part localization and robot guidance, while deep learning performs the actual defect inspection.
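The first scenario, classify first and then measure, can be outlined as a two-stage pipeline. The sketch below uses stand-in functions where a trained classifier and a calibrated measurement tool would normally sit. It does not use any PEKAT VISION or IMPACT API, and the product names and tolerance values are hypothetical.

```python
# Hypothetical tolerance table: allowed width range in mm per product type.
TOLERANCES_MM = {
    "bolt_m6": (5.8, 6.0),
    "bolt_m8": (7.8, 8.0),
}

def inspect(image, classify, measure_width_mm):
    """Stage 1: a learned classifier picks the product type.
    Stage 2: a rule-based measurement is checked against that type's tolerance."""
    product_type = classify(image)
    low, high = TOLERANCES_MM[product_type]
    width = measure_width_mm(image)
    return {"type": product_type, "width_mm": width, "ok": low <= width <= high}

# Stand-ins for the real tools; here an "image" is just a dict of known values.
def fake_classifier(image):
    return image["label"]

def fake_measurement(image):
    return image["width_mm"]

result = inspect({"label": "bolt_m6", "width_mm": 5.9}, fake_classifier, fake_measurement)
print(result)  # {'type': 'bolt_m6', 'width_mm': 5.9, 'ok': True}
```

Keeping the two stages separate means the classifier can be retrained for new product variants without touching the measurement rules, and the tolerances can be changed without retraining.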

Example: 🎥 360° Surface Quality Inspection

In this example (shown in the accompanying video), a P2x Smart Camera running IMPACT software is used for accurate part localization and robot guidance. At the same time, PEKAT VISION deep learning software performs the surface quality analysis.

The deep learning model is trained to detect significant surface scratches while intentionally ignoring minor cosmetic marks that are considered acceptable. This ensures reliable defect detection without over-rejecting good parts.

This combination approach allows manufacturers to leverage deterministic rule-based precision together with the adaptability of deep learning — all within one integrated solution.

The MX-G2000 Vision Processor makes this possible on a single device, running both PEKAT VISION deep learning software and IMPACT rule-based software side by side.

How do smart cameras differ from vision processors?

Smart cameras combine optics, sensor, and processing power into one compact device. This all-in-one design makes them easy to deploy and perfect for distributed inspection points — for example, checking completeness of packaging directly on the line, or classifying parts before sorting.

Vision processors, on the other hand, provide more computing power and scalability. They can connect to multiple cameras at once, handle higher resolutions, and run complex or parallel inspections that go beyond the capability of a single smart camera. Processors are often the choice when you need centralized control or want to integrate several inspections into one system.

In practice, the P-Series Smart Cameras are best when you need a compact, cost-effective solution for specific points in production. Vision processors such as the MX-E or MX-G2000 are the right fit when flexibility, performance, or multi-camera setups are required.

What about the Smart VS?

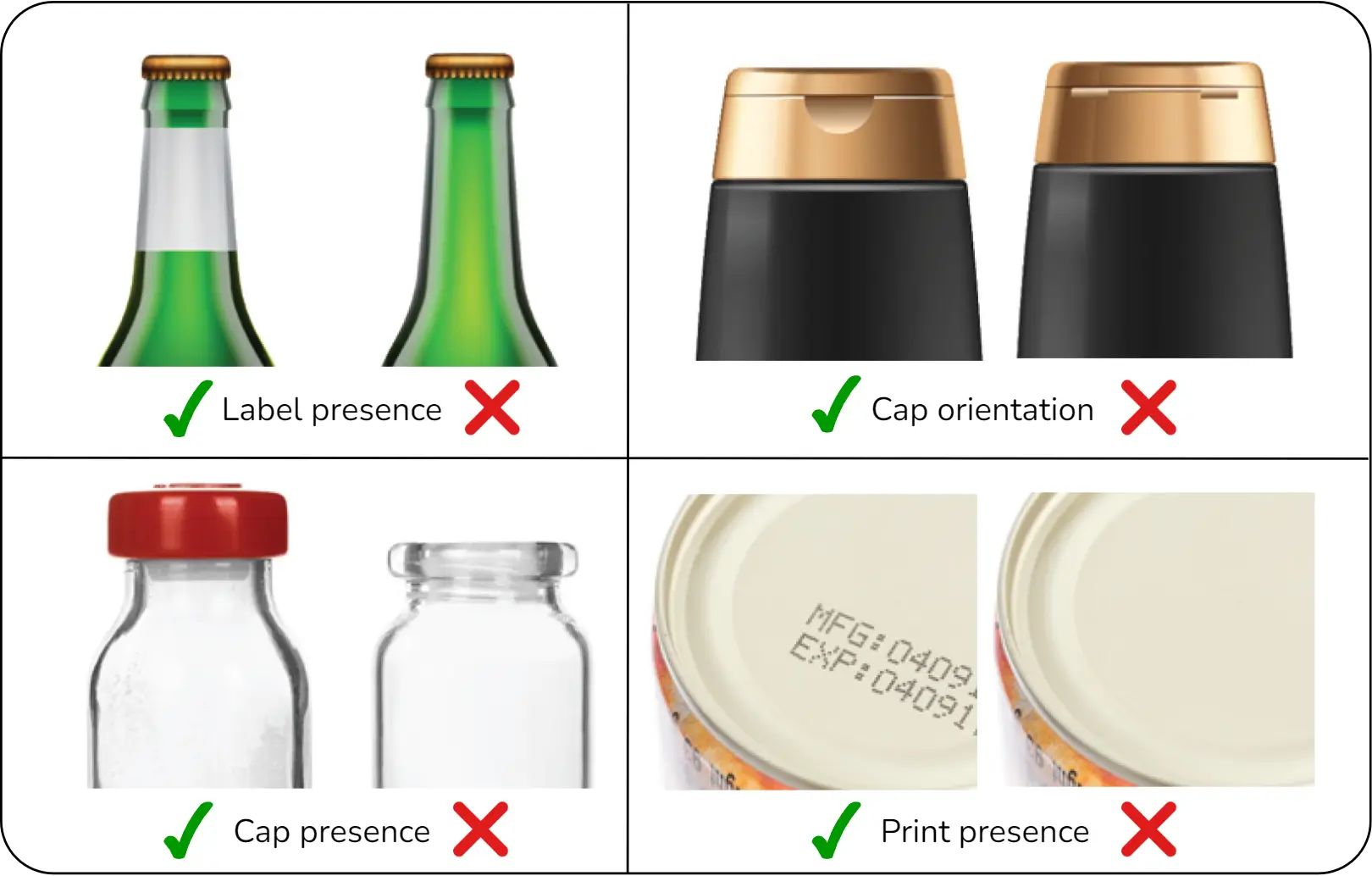

The Smart VS sensors are designed to be extremely simple to configure. They use deep learning for straightforward inspection tasks such as presence/absence checks or sorting. This makes them one of the most user-friendly ways to introduce AI-based inspection, especially in packaging and labeling lines.

They deliver these results at very high speed with deterministic response times, but their scope of use is limited. For example, the nominal sensing distance is 100–400 mm, and the number of training images is capped (up to 50 for the Smart VS EVO).

What’s the difference between area-scan and line-scan cameras?

Area-scan cameras capture an image in a single frame, which makes them highly versatile. They’re often used for inspecting parts on assembly lines, packaging checks, or label verification. With proper lighting and exposure control, they can also handle fast-moving objects — for example, bottles or cans in a high-speed bottling plant.

Importantly, an area-scan camera doesn’t need to capture the entire product at once. You can define a specific region of interest and analyze only that area, which keeps processing efficient and focused.
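In code terms, a region of interest is simply a rectangular crop of the full frame. The minimal sketch below represents a grayscale image as a list of pixel rows; the function names and the tiny synthetic image are hypothetical and only illustrate the idea.

```python
def crop_roi(image, x, y, width, height):
    """Return the sub-image whose top-left corner is at column x, row y."""
    if x < 0 or y < 0 or y + height > len(image) or x + width > len(image[0]):
        raise ValueError("ROI lies outside the image")
    return [row[x:x + width] for row in image[y:y + height]]

def mean_brightness(region):
    """Average gray value of all pixels in the region."""
    pixels = [p for row in region for p in row]
    return sum(pixels) / len(pixels)

# Synthetic 4x6 grayscale frame: a bright label area surrounded by dark background.
image = [
    [10, 10, 10, 10, 10, 10],
    [10, 200, 200, 200, 10, 10],
    [10, 200, 200, 200, 10, 10],
    [10, 10, 10, 10, 10, 10],
]

label_roi = crop_roi(image, x=1, y=1, width=3, height=2)
print(mean_brightness(label_roi))  # 200.0
```

Only the six pixels inside the region are processed here instead of all 24, which is the source of the efficiency gain on real, much larger frames.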

Line-scan cameras, by contrast, build an image line by line as the object moves past the sensor. This makes them the go-to choice for inspecting continuous materials such as textiles, paper, film, or metal sheets. They can cover very large objects or endless rolls with high precision, something area-scan cameras can’t handle efficiently.

The trade-off is in deployment. Area-scan cameras are easier to set up and generally require less complex lighting or motion control. Line-scan cameras offer unmatched accuracy for continuous processes, but they require precise synchronization with conveyor speed or material movement. If your application involves long, uninterrupted surfaces, line-scan is usually the right tool.
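The synchronization requirement comes down to simple arithmetic: to get square pixels, the camera must capture one line each time the material advances by one pixel width. The sketch below works through that calculation. The field of view, sensor width, and conveyor speed are made-up example values, not specifications of any particular camera.

```python
def object_pixel_size_mm(field_of_view_mm, sensor_pixels):
    """Width on the material that a single sensor pixel covers."""
    return field_of_view_mm / sensor_pixels

def required_line_rate_hz(speed_mm_per_s, pixel_size_mm):
    """Lines per second needed so each line covers one pixel width of travel."""
    return speed_mm_per_s / pixel_size_mm

# Example values (assumptions): 4096-pixel sensor covering a 409.6 mm wide web,
# material moving at 500 mm/s.
pixel_mm = object_pixel_size_mm(field_of_view_mm=409.6, sensor_pixels=4096)
line_rate = required_line_rate_hz(speed_mm_per_s=500.0, pixel_size_mm=pixel_mm)

print(f"{pixel_mm:.3f} mm per pixel")        # about 0.100 mm per pixel
print(f"{line_rate:.0f} lines per second")   # about 5000 lines per second
```

If the material speeds up while the line rate stays fixed, each line covers more travel and the image appears compressed along the direction of motion. This is why line-scan setups are commonly triggered from an encoder on the conveyor rather than from a fixed clock.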

If you’d like to see an example of how a line-scan camera can be used in practice, check out our recent blog post on inspecting continuous material using line-scan cameras.

Which solution is right for me?

That depends on your inspection challenge:

- If your products vary in appearance, deep learning is usually the way to go.

- If your task can be described in clear, measurable rules, rule-based vision may be the better fit.

- If you need both flexibility and precision, consider combining the two.

Hardware also matters. A simple sensor like the Smart VS can handle easy presence/absence tasks, while a smart camera such as the P-Series provides more power for flexible inspections. For large-scale or multi-camera systems, a vision processor like the MX-E or MX-G2000 is often the best choice.

The important part: you don’t need to decide alone. With a complete machine vision portfolio — deep learning and rule-based software, sensors, smart cameras, processors, and industrial cameras — we can help you find the right combination for your application.